The shift in the AI chip market is accelerating as Nvidia confronts mounting competition from its biggest customers: Google, Microsoft, Amazon, and Meta, which are increasingly building their own AI hardware to reduce costs and gain control over infrastructure. The change marks a critical turning point for Nvidia, whose GPUs have long powered the artificial intelligence boom but now face pressure as the industry pivots toward more efficient, purpose-built chips.

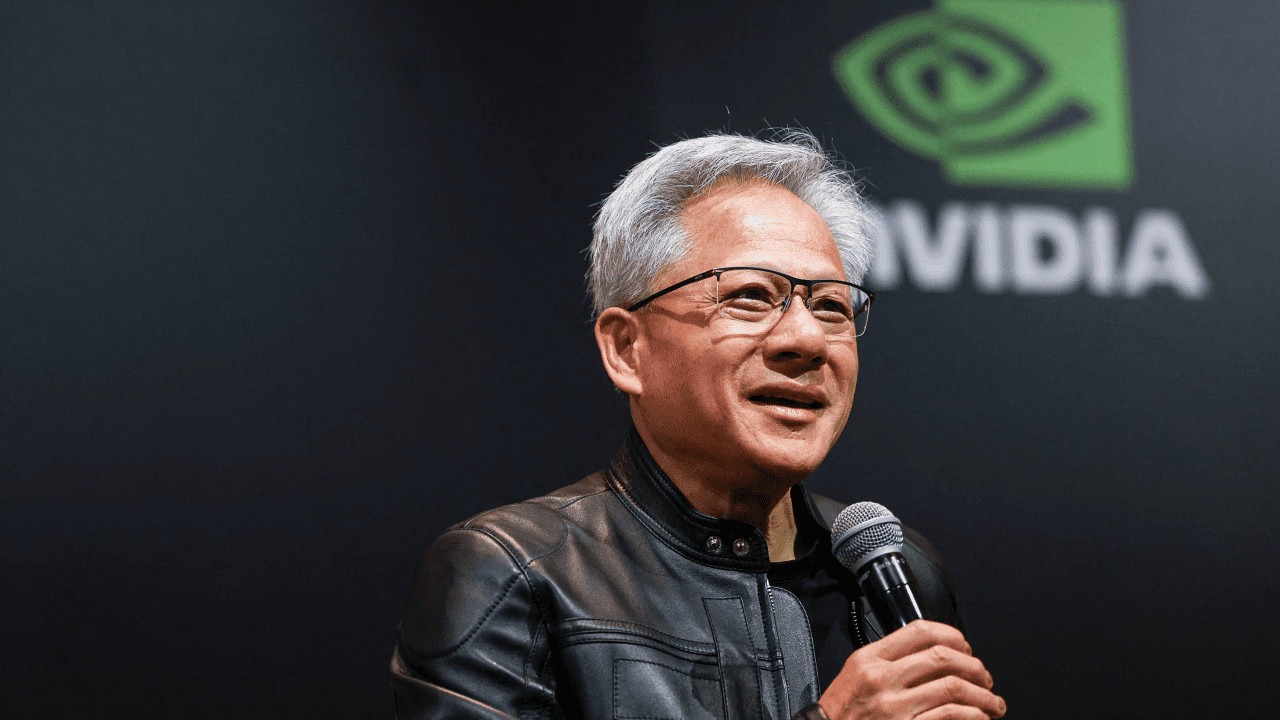

At the center of this transition is Nvidia CEO Jensen Huang’s upcoming announcement of a new inference-focused chip at the company’s GTC developer conference. The product, stemming from Nvidia’s $20 billion acquisition of Groq, signals a strategic adjustment: moving beyond the company’s long-standing “one chip for all workloads” philosophy toward specialized hardware designed for the next phase of AI computing.

For years, Nvidia dominated the AI accelerator space with an estimated 90% market share, driven by its CUDA software ecosystem and high-performance GPUs. That dominance translated into industry-leading margins and a soaring valuation. But as AI adoption matures, the economics of running models, especially at scale, are reshaping priorities across the tech landscape.

Industry / Market Impact

The most significant shift underway is the growing importance of inference, the stage where trained AI models are deployed to generate outputs. Analysts estimate inference could account for up to 75% of AI data center spending by 2030, up sharply from roughly half today.

This shift favors custom-designed chips over general-purpose GPUs. In response, major technology companies are accelerating their in-house chip programs:

- Google has introduced its Ironwood TPU, reportedly offering 30-44% lower total cost of ownership compared to Nvidia’s latest systems.

- Microsoft’s Maia 200 chip, built on advanced 3nm technology, claims substantial improvements in performance per dollar.

- Meta is rapidly iterating its MTIA chip lineup, releasing multiple versions in quick succession.

- Amazon is also advancing its own AI silicon strategy, targeting efficiency at hyperscale.

These chips are optimized specifically for inference workloads, making them more cost-effective for companies operating massive AI systems. The result is a growing challenge to Nvidia’s pricing power, once one of its strongest competitive advantages.
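To put the reported 30-44% total-cost-of-ownership range in concrete terms, the arithmetic can be sketched as below. This is only an illustration: the baseline of 100 cost units is a hypothetical figure chosen for readability, not a number from any vendor, and `tco_after_reduction` is a made-up helper name.

```python
def tco_after_reduction(baseline_cost: float, reduction: float) -> float:
    """Return the cost that remains after a fractional TCO reduction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    return baseline_cost * (1.0 - reduction)


# Hypothetical baseline: 100 cost units for a reference system (assumption).
baseline = 100.0

# The reported range of 30% to 44% lower total cost of ownership.
low_end = tco_after_reduction(baseline, 0.30)
high_end = tco_after_reduction(baseline, 0.44)

print(f"Cost at 30% reduction: {low_end:.1f} units")
print(f"Cost at 44% reduction: {high_end:.1f} units")
```

Under that assumption, the same workload would cost between roughly 56 and 70 units instead of 100, which is the kind of gap that weighs on a supplier's pricing power at hyperscale.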

The market has already reacted. Reports that Meta may explore alternatives to Nvidia hardware triggered a sharp sell-off, wiping out approximately $250 billion in Nvidia’s market value in a single trading session. Meanwhile, companies tied to alternative chip ecosystems saw gains, reflecting investor expectations of a more diversified AI hardware landscape.

Why This Matters

At stake is not just Nvidia’s market share, but the broader structure of the AI economy. The shift toward custom silicon represents a fundamental change in how large-scale AI systems are built and operated.

Nvidia’s traditional strength lies in its versatility: its GPUs can handle training, inference, and a wide range of other workloads. However, as AI deployment scales, efficiency and cost are becoming more critical than flexibility. Hyperscalers are increasingly prioritizing chips that can run specific tasks more cheaply, even if they lack the general-purpose capabilities of GPUs.

Another pressure point is hardware supply. Nvidia’s most advanced systems rely on high-bandwidth memory (HBM), which is in limited supply globally. By contrast, the Groq-derived architecture Nvidia is now integrating uses SRAM, potentially avoiding these bottlenecks and offering a more scalable alternative.

Huang’s pivot toward inference-specific hardware suggests recognition that the old model, dominating through a single universal platform, may no longer be sufficient in a rapidly evolving market.

What Happens Next

Despite the rising competition, Nvidia is unlikely to lose relevance in the near term. The AI market itself is expanding so quickly that multiple players can grow simultaneously. Industry analysts expect Nvidia, along with companies like Google and Amazon, to continue shipping large volumes of chips.

However, the competitive dynamics are clearly changing:

- Pricing pressure is expected to intensify as alternatives gain traction.

- Large cloud providers may reduce dependence on Nvidia by shifting workloads to in-house chips.

- Innovation cycles could accelerate as companies race to optimize for specific AI tasks.

Nvidia’s next moves, particularly the success of its new inference-focused products, will be closely watched. If the company can adapt its technology and maintain developer loyalty through its software ecosystem, it may retain a central role in the AI infrastructure stack.

But the era of uncontested dominance appears to be ending. The companies that once fueled Nvidia’s growth are now becoming its most formidable competitors, reshaping the future of AI hardware in the process.