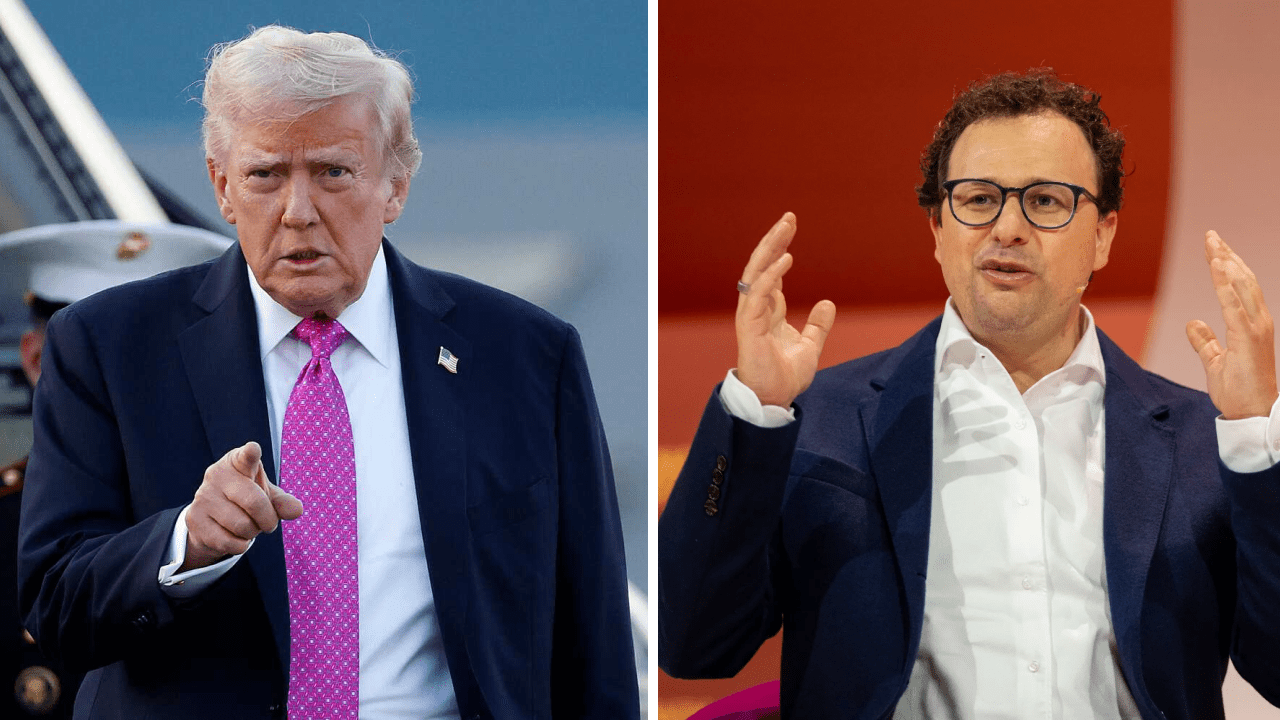

A sudden escalation in tensions between President Donald Trump and AI firm Anthropic has exposed deep divisions over how advanced artificial intelligence should be deployed in national defense, even as the U.S. military continued using the company’s technology in active combat operations.

Just hours after Trump directed federal agencies to cease using Anthropic’s systems, U.S. Central Command relied on the company’s Claude AI model during airstrikes on Iran, according to reports. The system supported intelligence analysis, target identification and battlefield simulations, underscoring the operational role AI now plays in modern warfare. The episode highlights both the Pentagon’s growing dependence on frontier AI tools and the political clash over guardrails governing their use.

Trump’s order mandates an immediate halt to the use of Anthropic’s technology across federal agencies, with a six-month phase-out period for departments currently using its systems, including the Department of Defense, which Trump has at times referred to as the “Department of War.” The move follows days of public and private confrontation between Defense Secretary Pete Hegseth and Anthropic CEO Dario Amodei over the scope of military access to the company’s AI models.

At the center of the dispute is whether Anthropic would permit unrestricted military use of its technology.

Clash Over Military AI Access

Anthropic has supplied AI tools to U.S. government agencies since June 2024 and was the first frontier AI developer to deploy models within classified federal networks. The Defense Department has used Claude in sensitive missions, including intelligence operations tied to the capture of Venezuelan leader Nicolás Maduro, according to prior reporting.

However, the relationship deteriorated as the Pentagon pressed for assurances that it could use Anthropic’s systems for “any lawful purpose.” Company executives sought explicit carve-outs prohibiting two specific applications: mass domestic surveillance and fully autonomous weapons.

Amodei said the company could not “in good conscience” agree to open-ended access without those safeguards. Pentagon officials countered that U.S. law and policy already prevent such abuses and accused the company of obstructing national security priorities.

Hegseth publicly declared Anthropic a “supply chain risk,” a designation typically reserved for foreign adversaries and one that would bar defense contractors from commercial dealings with the company. If formalized, it would mark the first time a U.S. firm has received such a label publicly.

Anthropic has vowed to challenge any such designation in court, calling it legally unsound and a dangerous precedent for American companies negotiating with the federal government.

Meanwhile, Trump escalated the rhetoric on Truth Social, accusing the firm of ideological bias and threatening to use the “full power of the presidency” to enforce compliance during the transition period.

AI on the Battlefield: A Growing Dependence

Despite the political standoff, the reported use of Claude in the Iran operation illustrates how embedded AI systems have become in defense planning.

Military commands, including U.S. Central Command, reportedly used Anthropic’s model to:

- Analyze intelligence streams

- Identify potential strike targets

- Run simulated battle scenarios

Such applications reflect a broader Pentagon strategy to integrate large language models and generative AI into operational workflows, from logistics planning to threat assessment.

The six-month phase-out period appears designed to prevent operational disruption. Officials have acknowledged that replacing embedded AI tools across classified systems cannot occur overnight without affecting readiness.

OpenAI Steps In

Amid the fallout, rival AI developer OpenAI announced it had reached an agreement with the Pentagon to deploy its models within classified defense networks. CEO Sam Altman said the arrangement aligns with safety principles prohibiting domestic mass surveillance and requiring human accountability in decisions involving the use of force.

The development signals that while Anthropic may be exiting federal contracts, the Pentagon’s reliance on advanced AI is accelerating rather than slowing.

Anthropic currently holds a $200 million Defense Department contract, according to public disclosures. The company has indicated it would cooperate in facilitating a smooth transition should the government formally end its use of Claude.

Industry / Market Impact

The confrontation marks one of the most consequential government-industry clashes in the emerging AI sector.

A formal “supply chain risk” designation could limit Anthropic’s ability to work not only with the Pentagon but also with private contractors tied to defense projects. That ripple effect could alter competitive dynamics among major AI firms vying for lucrative federal contracts.

The episode also sends a signal to Silicon Valley: participation in national security programs may increasingly require alignment with executive branch priorities. Companies seeking to maintain ethical constraints on AI deployment may face pressure to reconcile those policies with government demands.

For investors and policymakers, the dispute raises broader questions about how frontier AI firms balance commercial growth, ethical commitments and geopolitical obligations.

Why This Matters

The dispute arrives at a pivotal moment for AI governance in the United States.

As generative AI systems become embedded in intelligence analysis, logistics and battlefield planning, the boundaries of acceptable military use remain contested. Anthropic’s refusal to authorize unrestricted deployment highlights industry concerns about potential overreach, while the Pentagon’s response underscores the strategic imperative Washington places on maintaining technological superiority.

Critics, including lawmakers such as Senator Mark Warner, have questioned whether the administration’s approach risks politicizing national security decisions.

At stake is more than one contract. The outcome could shape how AI safety principles are negotiated between government and private developers, and whether companies can impose limits once their systems become mission-critical infrastructure.

What Happens Next

Federal agencies now face a six-month window to unwind Anthropic’s technology from their systems. The Defense Department must determine whether to replace Claude with OpenAI’s models or other alternatives, and how to manage continuity in classified environments.

Anthropic, for its part, is preparing for a potential legal battle if the “supply chain risk” label is formally applied.

Even as this standoff unfolds, the Iran strike demonstrates that AI tools are no longer experimental within U.S. defense operations. They are operational assets used in real-time military scenarios with geopolitical consequences.

The broader trajectory is clear: regardless of which company supplies the software, artificial intelligence will remain central to the future of American warfare.